What implications must you consider when working at this scale? This lecture addresses common challenges and general best practices for scaling with your data. Module: MapReduce and HDFS. These tools provide the core functionality to allow you to store, process, and analyze big data. This lecture “lifts the curtain” and explains how the technology works. You’ll understand how these components fit together and build on one another to provide a scalable and powerful system. Exercise Module: Getting Started with Hadoop.

If you’d like a more hands-on experience, this is a good time to download the VM and kick the tires a bit. In this activity, using the provided instructions, you’ll get a feel for the tools and run some sample jobs. Module: The Hadoop Ecosystem.

An introduction to other projects surrounding Hadoop, which complete the greater ecosystem of available large-data processing tools. Module: The Hadoop MapReduce API. Learn how to get started writing programs against Hadoop’s API.

Module: Introduction to MapReduce Algorithms. Writing programs for MapReduce requires analyzing problems in a new way. This lecture shows how some common functions can be expressed as part of a MapReduce pipeline. Exercise Module: Writing MapReduce Programs. Now that you’re familiar with the tools, and have some ideas about how to write a MapReduce program, this exercise will challenge you to perform a common task when working with big data – building an inverted index.
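To give a feel for what an inverted index looks like as a map, shuffle, and reduce pipeline, here is a minimal in-memory sketch in plain Python. It does not use the Hadoop API and is not the course's exercise solution; the function names (`map_phase`, `shuffle`, `reduce_phase`, `build_inverted_index`) and the sample documents are hypothetical, chosen only for illustration.

```python
from collections import defaultdict


def map_phase(doc_id, text):
    """Mapper: emit one (word, doc_id) pair for every word in a document."""
    for word in text.lower().split():
        yield word, doc_id


def shuffle(pairs):
    """Group values by key, imitating what the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(word, doc_ids):
    """Reducer: collapse the document IDs for one word into a sorted, de-duplicated list."""
    return word, sorted(set(doc_ids))


def build_inverted_index(documents):
    """Run the three phases in memory over a dict of {doc_id: text}."""
    pairs = [
        pair
        for doc_id, text in documents.items()
        for pair in map_phase(doc_id, text)
    ]
    grouped = shuffle(pairs)
    return dict(reduce_phase(word, ids) for word, ids in grouped.items())


# Hypothetical sample input, for illustration only.
docs = {
    "doc1": "hadoop stores big data",
    "doc2": "hadoop processes data",
}
index = build_inverted_index(docs)
```

On a real cluster the mapper and reducer would run on separate machines and the grouping step would be handled by the framework; the sketch only shows how the problem decomposes into key/value pairs.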

More importantly, it teaches you the basic skills you need to write your own, more interesting data processing jobs. Module: Hadoop Deployment. Once you understand the basics for working with Hadoop and writing MapReduce applications, you’ll need to know how to get Hadoop up and running for your own processing (or at least, get your ops team pointed in the right direction). Before ending the day, we’ll make sure you understand how to deploy Hadoop on servers in your own datacenter or on Amazon’s EC2.

DAY 2. Intermediate training focuses on importing data into Hadoop and building data processing pipelines. We’ll cover more advanced topics such as Hive and Pig and show participants how to use each effectively. Module: Augmenting Existing Systems with Hadoop.

To introduce our intermediate training session, we’ll take a step back and look at data systems more generally. Hadoop rarely replaces existing infrastructure, but rather enables you to do more with your data by providing a scalable batch processing system. This lecture helps you understand how it all fits together. Module: Best Practices for Data Processing Pipelines. In order for Hadoop to crunch large volumes of data, first you’ll need to get that data into Hadoop.

This lecture will help you understand how to import different types of data from various sources into Hadoop for further analysis. Exercise Module: Importing Existing Databases with Sqoop. Sqoop is a command line tool developed by Cloudera and contributed to the Hadoop project.

It provides an easy way to import data from RDBMSs and enables you to work with that data directly using MapReduce, Hive, or Pig. Module: Introduction to Pig. Pig is a high-level language for large-scale data analysis programs. Pig exposes many common MapReduce constructs in a simplified processing language, and is often used for ad-hoc analysis.

Exercise Module: Working with Pig. In this exercise, we’ll revisit some common tasks and see how you can accomplish them using Pig. Module: Introduction to Hive – A Data Warehouse for Hadoop.

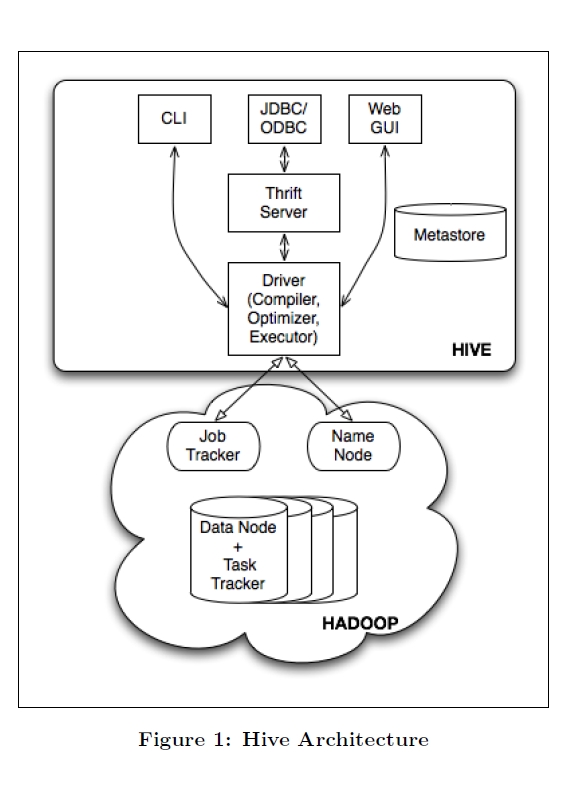

Hive is a powerful data warehousing application built on top of Hadoop which allows you to use SQL to access your data. This lecture will give an overview of Hive and the query language. Exercise Module: Working with Hive.

This exercise will show you exactly how to work with Hive. We’ll walk through importing data, creating tables, and making queries. Extended Subjects on Hive & Pig. This lecture will expose you to best practices for program design to mitigate debugging challenges, as well as local testing tools and techniques for debugging at scale.

Module: Advanced Hadoop API. In the basic training session, you learned how to get up and running writing Hadoop MapReduce programs in Java. This lecture probes deeper into the API, covering custom data types and file formats, direct HDFS access, intermediate data partitioning, and other tools such as the DistributedCache. Module: Advanced Algorithms.

This lecture introduces some graph algorithms that can be adapted for your needs, as well as more involved examples like PageRank. We’ll also look at strategies for implementing joins efficiently, and compare different techniques that are appropriate to different data models. Exercise: Optimizing MapReduce Programs. We’ll use the Training VM to work through an example where you write a MapReduce program and improve its performance using techniques explored earlier.

This course is for Java developers who want to know how and when to use Hadoop to solve Big Data problems.

This course assumes no prior knowledge of Hadoop, though participants should be comfortable reading and writing Java code; familiarity with Bash will help.

Our Consultant is a Strategic Enterprise Architect & Process Coach with an exceptional history and experience of 1. IT projects and strategies. Key player in organizational change. Strong background in enterprise infrastructure and architecture. Experience migrating legacy operations to Java, Ruby, Perl, Python on Cloud, Solaris and Unix platforms.

Currently focusing on Web, Mobile and Web Services domain. Excellent hands-on development skills. Mobility domain experience includes iPhone, Android, Blackberry, Titanium, PhoneGap. Over twelve years of success in meeting critical software engineering challenges. A known open source advocate, spreading communicating practices worldwide, for enterprise as well as ICT implementations. A speaker at various domestic and international conferences.

Enterprise Impact. Drives organizational change through high-impact enterprise strategies and practices: Improves enterprise IT strategies and practices for client engagement, project methodology, architectural governance, enterprise documentation, infrastructure migration, development team evolution, and IT support. Aligns IT with business strategies through agile methodologies that leverage business and technical architectures, information workflow, use case analysis, and risk assessment to verify fitness-for-purpose. Stabilizes ad hoc support operations by clearly defining the boundaries and responsibilities of project and operational teams, while progressively injecting fitness checks earlier into the project lifecycle.

Leads the turnaround of failed and blocked projects through progressive management techniques: Facilitates realistic project planning based upon time-boxed delivery of incremental functionality, centered on use cases, prioritized by business criticality, and driven by technical and organizational risk. Revitalizes software engineering teams through the introduction of agile best practices, including team programming, test-driven development (JUnit, RSpec, Cucumber, Selenium, SOA Webservices Testing), repeatable builds (Ant, Git, SVN), standardized environments, automated integration (CruiseControl), performance testing (JMeter), team testing, and continuous customer collaboration. Drives the architectural evolution of mission-critical systems, architectures, platforms, and components: Product lines built upon service-oriented enterprise architectures and open source frameworks on web platform (Ruby on Rails, Spring, Struts, Zend, Hibernate) & mobility domain that are secure (Kerberos, X. XML, JSON, JMS, Web Services), manageable, and serviceable. Mission-critical systems built upon robust multi-tier hardware architectures that are 2.

JavaScript Experience: Strong experience in implementing Java. Part of early implementation of ExtJS/jQuery with Ruby on Rails and Java based MVC framework.

Some of the projects consulted have been showcased at TechCrunch and TechCrunch Disrupt. Implementing jQTouch and jQuery for mobile web application development. Conducted a program on web mobile application development for IBM Bengaluru.

Focus on best practices, performance and scalability. Corporate Consulting & Training delivered: Wipro. Accenture. Samsung. Capgemini. Intel. JP Morgan Chase. Cognizant.
